If you’re physically attractive, the world simply treats you better. You’re more trusted. People think you’re competent. You have more freedom to act as you please. The list goes on. This reflects something called the physical attractiveness stereotype – people lacking any other information tend to believe that beautiful people have traits that they (or their culture) find desirable. This is both common sense and an empirically supported finding. No one will argue – the pretty people have it all (even if they don’t deserve it!).

But what about virtual attractiveness? In an online virtual world, people have complete control over the appearance of their avatars. They can look however they want; I choose a tall man in a snappy black suit with a pretty slick haircut. Do people react to the attractiveness of virtual people the same way they react to real people?

A recent study by Banakou and Chorianopoulos¹ in the fascinating Journal of Virtual Worlds Research (freely available online; see the reference below) examines the effects of both attractiveness and gender in Second Life, the most popular persistent online virtual world. The overall verdict? Yes – and more. Not only does attractiveness change how people treat you, but it also seems to change the way you behave. Attractiveness (and gender) affect virtual interactions on both sides: if you’re attractive, you’re not only more likely to succeed in striking up a conversation with a virtual stranger but also more likely to make the effort in the first place.

The researchers examined this by creating four avatars, crossing gender and attractiveness: attractive male, unattractive male, attractive female, unattractive female. Nine participants (four females and five males) were deceived into believing they were participating in a study on how chat works in virtual environments. Using a within-subjects design, each participant was placed in a location with lots of active users and asked to strike up conversations with strangers, with a total of 205 individual attempted interactions.

Several interesting findings came up:

- More SL users tried to strike up a conversation with the research participants when they were using the attractive avatar.

- Research participants tried to start more conversations when they were using the attractive avatar.

- Of SL users who initiated private chats with research participants, 21% of those chatting with an attractive avatar tried to pursue a friendship beyond the single interaction, compared with roughly 3% of those chatting with an unattractive avatar.

- Female avatars have a slightly higher success rate in general (surprise, surprise).

- Female research participants tended to seek out visibly male SL users more often than visibly female SL users when they were in an attractive avatar. The same effect was present in the unattractive avatar, but it was much less pronounced. Male participants tended to talk to visibly female SL users regardless of their avatar’s attractiveness.

Before internalizing these findings, consider several caveats. The most problematic is this: since there were only nine participants, it is quite probable that some sample-specific effects are at work. Without more data, however, there’s no way to know precisely what those effects might be. The conclusion about female-specific behavior above (the fifth finding) is technically based on N = 4.

The second is the representation of the construct “attractiveness.” Here are the four avatars themselves:

“Attractiveness” in psychology is a complex concept. Clothing, body shape (silhouette), facial features, symmetry – these are all aspects of attractiveness. It’s hard to say in this study precisely what aspect of attractiveness elicited the effect. Additionally, because default Second Life avatars were used as the “unattractive” option (i.e. an avatar automatically assigned to a person when signing up), there may be other evaluations going on in the minds of the SL folks. Perhaps they see a default avatar and assume this is not a “serious” SL user and thus not worth their time – not an effect of attractiveness at all. But this does not explain differences in study participant behavior.

Regardless, this study raises several provocative questions about virtual interactions and confirms, at the very least, that your virtual appearance changes the way people interact with you and, furthermore, the way you interact with them. This has important implications for virtual worlds research moving forward: avatars cannot be assigned to participants casually, as the evidence here suggests that avatar appearance may influence participant (trainee) behavior.

- Banakou, D., & Chorianopoulos, K. (2010). The effects of avatars’ gender and appearance on social behavior in virtual worlds. Journal of Virtual Worlds Research, 2(5). https://journals.tdl.org/jvwr/article/view/779

When I teach undergraduate statistics, I often include an assignment where I ask my students to take a specific chart or figure found in the news media and explain it rationally. Where did the numbers come from? What does it really mean? How much trust can we put in the accuracy of this figure? Is this figure deliberately trying to mislead you?

The especially sad part of this is that finding misleading figures for the assignment is not very difficult. The news media’s goal is to intrigue and entertain, and that often means that accuracy falls by the wayside.

Today, one such figure jumped out at me. It’s not only from the news media, but it’s also been helpfully augmented by a reader to provide a social message:

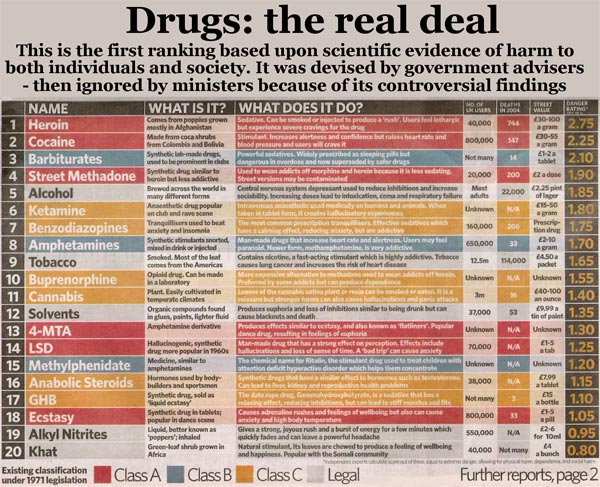

My quarrel is not necessarily with the content of this chart but with the silly way it’s presented in the graphic. The top says, “this is the first ranking based upon scientific evidence.” Well, maybe.

The very bottom of the chart gives some fine-print details. If you can’t make them out above, here they are: “independent experts calculate score out of three: equal to extreme danger, allowing for physical harm, dependence, and social harm.” That provides little direction, and the poor grammar doesn’t help! What follows are the thoughts I had in the first 5–10 seconds of looking at this chart.

- We have no information on how many experts there were or where they came from. This could be anyone! The graph implicitly asks us to trust the expertise of the people making the ratings, yet no credentials are provided.

- “equal to extreme danger, allowing for physical harm, dependence, and social harm” is vague, but it appears that extreme danger is the overall score, while physical harm, dependence, and social harm are the three dimensions of extreme danger. That’s a starting point for understanding what this table really tells us.

- We can try to make some educated guesses as to where the specific numbers came from. Since the “danger rating” is out of 3 (also written in very small print in the table header), the three apparent dimensions were likely three scores on a scale of 0 to 3, combined as a simple mean. Is that the best idea? That’s hard to say. Is social harm as important as physical harm? Is dependence as destructive as social harm? A simple mean quietly assumes that the answer is yes, and not everyone will agree.

- The scale has few fine gradations: every score is a multiple of 0.05. It’s not conclusive, but it strongly suggests that 20 values (or a multiple of 20) are going into these ratings. Why? Because 1/20 = 0.05. Combined with the mean-of-three-ratings theory above, this doesn’t make sense. If 5 people were providing 3 ratings each, our smallest distinction would be 1/(3*5) = 1/15 = .067. For 6 people making 3 ratings, 1/(6*3) = 1/18 = .056. For 7 people making 3 ratings, 1/(7*3) = 1/21 = .048. There is no number of raters, given a mean of 3 ratings each, that would produce a step of exactly .05. So something’s off here.

- Some explanation may come from this observation: a huge group in the middle of the list (from #3 Barbiturates to #19 Alkyl Nitrites) is packed into a narrow band, with neighboring entries differing by only 0.05 or 0.10. And yet no two entries share the same number! That’s extremely suspicious: with such a coarse scale and what appears to be a small group of raters, we’d expect at least a little overlap. That suggests that either 1) this list was modified by the editor after it was formed or 2) the “experts” came to some sort of group agreement, casting even more doubt on the impartiality of the results.

- I generally don’t trust anything where the phrase “scientific evidence” is thrown around so casually. It’s not scientific just because you say it is.
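The two numerical hunches in the list above (the 0.05 step and the missing ties) can be checked with a few lines of Python. This is a minimal sketch that mirrors the reasoning above rather than any documented method behind the chart. The first part assumes, as the step arithmetic implicitly does, that each rater gives three whole-number ratings that are all averaged together; the second treats the tie question as a birthday problem, assuming (unrealistically) that scores land uniformly on the 0.05 grid. The figure of 30 possible grid values is invented for illustration.

```python
from fractions import Fraction
from math import prod

# --- Check 1: can a mean of 3 whole-number ratings per rater move in steps of 0.05? ---
TARGET_STEP = Fraction(1, 20)  # 0.05, the apparent granularity of the chart


def smallest_step(n_raters, ratings_per_rater=3):
    """Smallest possible difference between two averaged scores, assuming
    n_raters each give ratings_per_rater whole-number ratings, all averaged."""
    return Fraction(1, n_raters * ratings_per_rater)


for n in (5, 6, 7):
    step = smallest_step(n)
    print(f"{n} raters: step = {step} = {float(step):.3f}")

# 1/(3n) == 1/20 would require 3n == 20, which no whole number n satisfies.
matching = [n for n in range(1, 101) if smallest_step(n) == TARGET_STEP]
print("rater counts giving a 0.05 step:", matching)


# --- Check 2: how surprising is it that no two middle entries tie? ---
def prob_all_distinct(n_entries, n_grid_values):
    """Birthday-problem probability that n_entries scores, each drawn independently
    and uniformly from n_grid_values possible values, are all different."""
    if n_entries > n_grid_values:
        return 0.0
    return prod((n_grid_values - i) / n_grid_values for i in range(n_entries))


# Hypothetical: 17 entries (#3 to #19) spread over 30 possible 0.05-wide values.
p = prob_all_distinct(17, 30)
print(f"P(no ties) = {p:.4f}")
```

With those made-up inputs, the chance that 17 scores are all distinct comes out well under 1%. A uniform spread is the most favorable case for avoiding ties, so any clustering of real scores would make ties more likely still; the result is only as good as the assumptions fed into it.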

I will point out, however, that none of these points are conclusive. They are just the initial reactions I have to a poorly made graphic. These numbers may be valid, but we simply don’t have enough information to trust them.

What could have been done to improve the graphic? Easy – provide details on the methodology. A sentence or two might be all that’s required, even in another tiny footnote. But as it stands, this graphic tells us practically nothing.

I’ll go ahead and admit it – I love general-audience articles by Frank Schmidt. If you aren’t familiar with him, he is one of the masterminds behind Hunter-Schmidt psychometric meta-analysis, one of the most widely adopted methods to summarize research results across studies in OBHRM and psychology. I love his general-audience articles because virtually all of them use straightforward examples of how data in the wrong hands can mislead. Frankly, they remind me of a more technical version of Ben Goldacre’s fantastic blog, Bad Science.

His most recent piece, “Detecting and Correcting the Lies That Data Tell” (full text available online)¹, is no exception. Appearing in Perspectives on Psychological Science, it details an example of where statistical significance testing can lead you astray. In his example, he presents 20 correlations, ranging from .02 to .39, found in the research literature relating decision-making test scores to supervisory ratings of job performance. Half are statistically significant and half are not. From this, he identifies three conclusions that are typically drawn:

1. The Moderator Interpretation: There is no relationship in half of the organizations, but there is a relationship in the other half.

2. The Majority Rule: Because half are significant and half are not, err on the side of caution and conclude there is no relationship between the two (if one side had a majority of “significances,” go with that).

3. Face-Value: Accept the correlations as they are; there is variation, but the relationship is positive.

Of these three, #1 and #2 are the most common, despite the fact that all three are incorrect (at least in this case).

To show how this could be, Schmidt summarizes the distribution of those 20 sample correlations: a mean of r = .20 with SD = .08. But this is not the whole story. That .08 actually combines two pieces of information:

- Actual variation in population correlations

- Variation due to sampling error, the tendency for sample statistics to vary randomly (and predictably) around a population value, and to do so more substantially when sample sizes are small

After correcting for sampling error, it becomes clear that the observed SD is heavily inflated by it. The true SD is only .01, meaning that the population correlation barely varies from one organization to the next. That’s a remarkably consistent relationship! The variation observed in those initial 20 correlations was due to little more than random chance. In other words, luck. A science should not be built on luck.
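The logic of splitting the observed SD into its two pieces can be sketched as a “bare-bones” meta-analysis, which corrects for sampling error only. This minimal Python sketch uses the textbook Hunter-Schmidt estimate of sampling-error variance, (1 − r̄²)² / (N̄ − 1); it is an illustration, not a reproduction of Schmidt’s analysis. Because the sample sizes behind his 20 correlations aren’t given here, the usage example simulates 20 hypothetical studies of N = 150 that all share a single population correlation of .20, so any spread in the observed correlations is pure sampling error.

```python
import math
import random


def bare_bones_meta(rs, ns):
    """Bare-bones (sampling-error-only) meta-analysis of correlations.

    Returns the N-weighted mean r, the observed SD of r, the SD expected from
    sampling error alone, and the residual ("true") SD left after removing it.
    """
    total_n = sum(ns)
    mean_r = sum(n * r for r, n in zip(rs, ns)) / total_n
    var_obs = sum(n * (r - mean_r) ** 2 for r, n in zip(rs, ns)) / total_n
    mean_n = total_n / len(ns)
    var_err = (1 - mean_r ** 2) ** 2 / (mean_n - 1)  # expected sampling-error variance
    var_res = max(0.0, var_obs - var_err)  # negative estimates are set to zero
    return mean_r, math.sqrt(var_obs), math.sqrt(var_err), math.sqrt(var_res)


def simulated_r(rho, n, rng):
    """Pearson r from one simulated study of size n with population correlation rho."""
    xs = [rng.gauss(0, 1) for _ in range(n)]
    ys = [rho * x + math.sqrt(1 - rho ** 2) * rng.gauss(0, 1) for x in xs]
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical: 20 studies of N = 150, all sharing ONE population correlation of .20.
rng = random.Random(2010)
ns = [150] * 20
rs = [simulated_r(0.20, n, rng) for n in ns]
mean_r, sd_obs, sd_err, sd_res = bare_bones_meta(rs, ns)
print(f"mean r = {mean_r:.2f}, observed SD = {sd_obs:.3f}, "
      f"sampling-error SD = {sd_err:.3f}, residual SD = {sd_res:.3f}")
```

In runs like this one, the residual SD typically lands near zero: the apparent disagreement across studies is largely what sampling error alone predicts, which is the pattern Schmidt describes.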

Schmidt goes on to demonstrate that the observed mean correlation (.20) is also biased downward by measurement error, i.e., unreliability. Correlations are predictably biased downward when imperfect measures are used.

Consider this example: two supervisors rate the job performance level of a single employee, and their ratings don’t agree. Why not? The reasons are myriad: they observe different aspects of the employee’s job performance, they have personal characteristics (agreeableness, for example) that vary, they have differing interpersonal relationships with their employees, etc. But does that mean that the actual job performance level of the employee is different for each supervisor? Probably not – the employee is performing at a specific level, and the two supervisors are simply interpreting this performance level differently. This is criterion-related unreliability.

The problem is that just because the supervisors can’t agree as to the performance level of an employee doesn’t mean that the employee’s job performance level is not consistent. When this artificial inconsistency is introduced, correlations based upon those unreliable scores will be biased downward, producing misleading results (lies!). But since unreliability affects correlations in a predictable fashion, we can apply statistical corrections to compute the real correlation: the correlation we would have found if we measured actual job performance rather than supervisors’ views of it. In Schmidt’s example, the corrected correlation is .32.
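The classical correction for attenuation behind this kind of adjustment divides the observed correlation by the square root of the reliabilities involved. Here is a minimal Python sketch; the reliability value in the example is hypothetical, and because the exact corrections Schmidt applied aren’t described here, it is not meant to reproduce his .32.

```python
import math


def correct_for_attenuation(r_xy, r_yy, r_xx=1.0):
    """Classical (Spearman) correction for attenuation.

    r_xy: observed correlation between predictor and criterion
    r_yy: reliability of the criterion (e.g. interrater reliability of ratings)
    r_xx: reliability of the predictor; the default of 1.0 corrects the criterion only
    """
    if not (0 < r_xx <= 1 and 0 < r_yy <= 1):
        raise ValueError("reliabilities must be in (0, 1]")
    return r_xy / math.sqrt(r_xx * r_yy)


# Hypothetical criterion reliability for supervisory ratings.
observed_r = 0.20
print(round(correct_for_attenuation(observed_r, r_yy=0.52), 2))
```

Because the reliability sits under a square root in the denominator, the less reliable the ratings, the larger the upward correction.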

That’s substantial! We’ve found that this decision-making test predicts 6.24 percentage points more variance in performance (.32 × .32 − .20 × .20 = .1024 − .04) than we originally thought; the corrected figure is 256% of our original amount!
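Written out, with r = .20 as the observed correlation and r_c = .32 as the corrected one, the variance-explained arithmetic is:

$$
r_c^2 - r^2 = .32^2 - .20^2 = .1024 - .0400 = .0624,
\qquad
\frac{r_c^2}{r^2} = \frac{.1024}{.0400} = 2.56
$$

That is, the corrected correlation explains 6.24 percentage points more variance, or 2.56 times the variance implied by the uncorrected .20.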

Schmidt thus demonstrates the importance of a complete understanding of statistical significance testing to advancing organizational science – not only from an academic perspective, but from an applied perspective as well. It is quite easy to mislead yourself when constraining your research to a single organization – much care must be taken in interpretation, lest poor organizational decisions be made.

- Schmidt, F. (2010). Detecting and correcting the lies that data tell. Perspectives on Psychological Science, 5, 233–242. https://doi.org/10.1177/1745691610369339