In a recent article in the Journal of Computer Assisted Learning, Junco, Heiberger and Loken (2011) examine the value of Twitter for improving student engagement and grades. Junco et al. conclude that adding Twitter to a course improved both student engagement and semester GPAs.

The study design was somewhat peculiar. Seven sections of a one-credit first-year pre-health seminar course formed the setting for the study. Four of these sections were randomly assigned as the experimental group (74 students) and three sections were assigned to the control group (58 students). Students in the experimental group used Twitter as part of their class, while the control group did not. All sections used Ning instead of a standard LMS like Blackboard/WebCT/Moodle. As students were not randomly assigned to conditions, the present study is a quasi-experiment.

Student composition is an important issue to explore here, and the specifics of this sample may have contributed to Junco et al.’s results. 98% of the sample was between 18 and 19 years old, 60% were female, 91% were white, and 72% had at least one parent with a college degree. This creates a picture of a very specific kind of student – and perhaps a type that would be more receptive to a Twitter-enhanced course.

So how was Twitter actually used? In the second week of the course, students received an hour-long training session on how to use Twitter – how they filled an hour, I have no idea, although students were required to send an introductory tweet during this session. Q&A was then held over the next few weeks during class. Twitter was then used for at least 11 purposes:

- Continuing class discussions online

- Providing a low-stress way to ask questions

- Discussing a required book

- Providing course-related reminders

- Providing campus-related reminders

- Providing information about campus events and resources (e.g. information on the campus tutoring center)

- Helping students connect in the context of the course

- Organizing service learning

- Organizing study groups

- Posting optional assignments (which required Twitter)

- Posting required assignments (which also required Twitter)

Here, we encounter a common problem with technology studies: there is no way to disentangle the effect of 1) providing additional learning opportunities in general and 2) using the technology. These students got extra assignments, extra attention, and so on. How do we know it was Twitter and not the additional opportunities provided that produced any observed results?

The results themselves were small but statistically significant. The researchers used a mixed effects ANOVA with sections nested within condition to try to account for course section being confounded with treatment condition (remember, this is a quasi-experiment). They found statistically significantly higher difference scores for the experimental group than the control group, indicating that engagement increased to a greater extent for the Twitter users than the non-Twitter users.
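To make the "difference score" idea concrete, here is a minimal Python sketch. The records, section labels, and engagement scores are entirely hypothetical, and averaging within sections before comparing conditions is only a simple stand-in for the nested ANOVA the researchers ran, not a reproduction of it.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical records: (section, condition, pre_score, post_score).
# These numbers are made up for illustration; they are not from the study.
records = [
    ("A", "twitter", 2.0, 3.0),
    ("A", "twitter", 2.5, 3.0),
    ("B", "twitter", 3.0, 3.5),
    ("B", "twitter", 2.0, 2.5),
    ("C", "control", 2.5, 2.5),
    ("C", "control", 3.0, 3.5),
    ("D", "control", 2.0, 2.0),
    ("D", "control", 2.5, 3.0),
]


def condition_gains(rows):
    """Average difference scores by section, then by condition."""
    gains = defaultdict(list)  # (condition, section) -> student gains
    for section, condition, pre, post in rows:
        gains[(condition, section)].append(post - pre)

    # Sections, not students, were assigned to conditions, so each
    # section contributes a single mean gain to its condition.
    section_means = defaultdict(list)
    for (condition, _section), values in gains.items():
        section_means[condition].append(mean(values))

    return {cond: mean(vals) for cond, vals in section_means.items()}


result = condition_gains(records)
gap = result["twitter"] - result["control"]
```

Treating the section as the unit of analysis is the conservative choice here: with only seven sections in the real study, it is a reminder of how little independent information a design like this actually contains.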

Differences in “semester GPA” were also found. It is not made clear whether this refers to overall semester GPA or only to the GPA for the course in question. Either way, the Twitter group came out ahead: 2.79 vs. 2.28 for the control group. The researchers also ran the same comparison with high school GPA as the dependent variable, as a check against pre-existing group differences introduced by quasi-experimentation. No statistically significant difference was found, which offers some support for the claim that the two groups were comparable before the course began.

There is little information given on how the instructors of these courses accounted for experimenter bias, and the researchers admit this makes interpretation tricky. The use of Twitter forced the instructors themselves to engage to a much greater degree than they did for the control group, which likely affected student perception, participation, and results. To what extent Twitter helps students more than it motivates instructors to engage with students is left to future research.

- Junco, R., Heiberger, G., & Loken, E. (2011). The effect of Twitter on college student engagement and grades. Journal of Computer Assisted Learning, 27(2), 119-132. DOI: 10.1111/j.1365-2729.2010.00387.x

Recent research by Tokunaga (2011) in Cyberpsychology, Behavior, and Social Networking derives ten categories of bad experiences that people have on online social networks. Here they are, in descending order of how commonly they were reported:

- The person initiates a friend request which is denied or ignored by the person he sends it to.

- The person tags a photo or leaves a message on a friend’s profile and later discovers it has been deleted.

- The person visits a friend’s profile and discovers he is not ranked where he thinks he should be ranked on a “Top Friends” list.

- The person learns that someone else has been “stalking” his profile.

- The person waits longer than he expected for a response to a post.

- The person discovers negative (e.g. flaming) comments on his profile, left by other people.

- The person discovers someone else has written something about him that he did not know about – interestingly, the information does not need to be negative to be unwanted.

- The person discovers that even though a friend request has been accepted, he has limited access to the new friend’s profile.

- The person was removed as a friend.

- The person discovers a group he wants to join but is not permitted to; alternately, the person discovers a group has been made about him without his permission.

To get this list, the researcher surveyed 197 undergraduates, who were asked to describe situations in which they had a bad experience while using social network sites; the average response was about 75 words. A coding system was then used to pare the qualitative data down into the categories reported here.

This research has all the typical characteristics of qualitative work: fascinating but not terribly useful. We don’t, for example, know if the psychological experience of any of these is worse than any other. Personally, I’d guess that being defriended is uncommon but more troubling than many of the others. We don’t know which social network sites most of the students were talking about, but we can guess most are about Facebook. We also don’t know if any of these events are actually more common than any others – only that these events are what the surveyed undergraduates most immediately thought of when given an open-ended question.

But at the least, this research provides an excellent starting point to understand the potential negative consequences of social media – and perhaps more interestingly, how to avoid or minimize these consequences. Clearly, social media is not all roses and sunshine.

- Tokunaga, R. (2011). Friend me or you’ll strain us: Understanding negative events that occur over social networking sites. Cyberpsychology, Behavior, and Social Networking. DOI: 10.1089/cyber.2010.0140

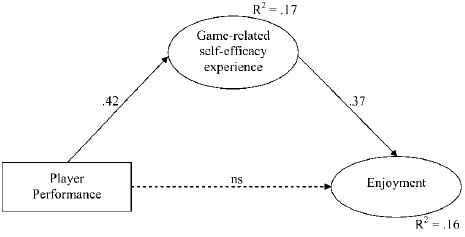

In a recent article in Cyberpsychology, Behavior, and Social Networking, Trepte and Reinecke (2011) explore one potential mediator of the relationship between player performance and game enjoyment: self-efficacy. If players succeeded at the game (played well), they only enjoyed the game if they felt that they were responsible for that success.

To examine this issue, the researchers had 213 research participants play Crazy Chicken: Heart of Tibet, a platformer by Sproing Interactive Media. They played this for 30 minutes, after which they completed several survey measures.

Three variables were used in their analyses:

- Player performance was captured by adding the frequencies of several key game “events,” which included collecting various game items, reaching new levels, activating saves, and so on.

- Self-efficacy, which is the extent to which players believe they are able to succeed at the game, was captured with a previously researched 11-item scale with items like, “I had the impression that I could immediately affect things on the screen.”

- Game enjoyment was captured with a 5-item scale developed for this study (risky!), with items like, “Playing the game was fun.”

Their primary hypothesis was that the relationship between player performance and game enjoyment was mediated by self-efficacy; in other words, in order for player performance to affect enjoyment, it needed to affect self-efficacy first.
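For readers who have not run a mediation analysis, here is a minimal Python sketch of the classic regression-based version: path a (performance to self-efficacy), path b (self-efficacy to enjoyment, controlling for performance), and the indirect effect a × b. The data are simulated and the coefficients 0.6 and 0.5 are my own assumptions; the researchers used SEM, so this illustrates the logic rather than their procedure.

```python
import random
from statistics import mean


def cov(u, v):
    """Sample covariance of two equal-length lists."""
    mu, mv = mean(u), mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def simple_slope(x, y):
    """OLS slope from regressing y on x alone."""
    return cov(x, y) / cov(x, x)


def two_predictor_slopes(x, m, y):
    """OLS slopes for x and m when y is regressed on both."""
    sxx, smm, sxm = cov(x, x), cov(m, m), cov(x, m)
    sxy, smy = cov(x, y), cov(m, y)
    det = sxx * smm - sxm ** 2
    slope_x = (smm * sxy - sxm * smy) / det
    slope_m = (sxx * smy - sxm * sxy) / det
    return slope_x, slope_m


def mediation_paths(x, m, y):
    """Decompose the x -> y effect into direct and indirect parts."""
    a = simple_slope(x, m)                     # x -> mediator
    total = simple_slope(x, y)                 # x -> y, mediator ignored
    direct, b = two_predictor_slopes(x, m, y)  # x -> y and m -> y together
    return {"a": a, "b": b, "indirect": a * b,
            "direct": direct, "total": total}


# Simulated (hypothetical) data in which performance affects enjoyment
# only through self-efficacy.
rng = random.Random(0)
performance = [rng.gauss(0, 1) for _ in range(213)]
efficacy = [0.6 * p + rng.gauss(0, 1) for p in performance]
enjoyment = [0.5 * e + rng.gauss(0, 1) for e in efficacy]

paths = mediation_paths(performance, efficacy, enjoyment)
```

In ordinary least squares the total effect always equals the direct effect plus the indirect effect, so full mediation shows up as a direct effect near zero with a sizable indirect effect.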

So what does that mean, exactly? It means that just because a player succeeds at a game, they’re not necessarily going to enjoy that game. For example, if the game is very easy such that they never feel challenged, even if they are playing extremely well, they are less likely to enjoy the game. If they have self-efficacy experiences (e.g. being challenged and overcoming those challenges), they are more likely to enjoy the game. This is reminiscent of another study I reported on recently on the cognitive state of confusion leading to engagement in learning games.

Unfortunately, there are weaknesses to this study. It relies on structural equation modeling (SEM; a complex statistical procedure allowing for the estimation of multiple relationships simultaneously, which is generally a good thing) but doesn’t really use it convincingly. SEM, you see, is easy to manipulate. Reporting a single set of model fit statistics doesn’t really tell you much about whether your theory makes sense because finding fit is not all that difficult. To identify a model with good fit, one wants a high CFI and a low RMSEA, but a high CFI and a low RMSEA are almost the standard state of affairs. If your model even sort of makes sense, you’ll find “acceptable” fit statistics.

Instead, the real power of SEM is model comparison. When you have two alternate ways of looking at the same data and want to see which fits better, you are unleashing the real power of SEM. It would have been more helpful here to examine other competing hypotheses. For example, perhaps the relationship between self-efficacy and enjoyment is mediated by player performance, i.e. instead of what the researchers reported, perhaps experiences of self-efficacy lead to enjoyment, but only if that high self-efficacy leads to higher performance. There is prior research supporting both of these models, but the researchers only tested one of these; that’s a shame, since they have the data to have tested both. Determining which approach fit better would be much more informative.
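A real SEM comparison needs dedicated software, but the core idea can be sketched in a few lines of Python under a simplification I am assuming here: with three observed variables, a full-mediation chain A -> B -> C implies that A and C are uncorrelated once B is held constant. Each competing model therefore predicts that a different partial correlation is zero, and the model whose prediction is violated less fits better. The data are simulated and the variable names are mine; nothing below comes from the study.

```python
import random
from math import sqrt
from statistics import mean


def pearson(u, v):
    """Pearson correlation of two equal-length lists."""
    mu, mv = mean(u), mean(v)
    num = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    den = sqrt(sum((a - mu) ** 2 for a in u) * sum((b - mv) ** 2 for b in v))
    return num / den


def partial_corr(a, c, given):
    """Correlation between a and c after controlling for `given`."""
    r_ac = pearson(a, c)
    r_ab = pearson(a, given)
    r_cb = pearson(c, given)
    return (r_ac - r_ab * r_cb) / sqrt((1 - r_ab ** 2) * (1 - r_cb ** 2))


# Simulated (hypothetical) data generated under the reported ordering:
# performance -> self-efficacy -> enjoyment.
rng = random.Random(42)
performance = [rng.gauss(0, 1) for _ in range(500)]
efficacy = [0.6 * p + rng.gauss(0, 1) for p in performance]
enjoyment = [0.5 * e + rng.gauss(0, 1) for e in efficacy]

# Each model's misfit is the size of the partial correlation
# that the model says should be zero.
misfit = {
    "performance -> efficacy -> enjoyment":
        abs(partial_corr(performance, enjoyment, efficacy)),
    "efficacy -> performance -> enjoyment":
        abs(partial_corr(efficacy, enjoyment, performance)),
}
better = min(misfit, key=misfit.get)
```

Because the data were generated under the first ordering, that model wins here, which is no surprise. With real data the outcome is not known in advance, and that is exactly why running both models is informative.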

Self-efficacy is also operationalized a little oddly here. Generally when we think of self-efficacy, we think of self-efficacy for success – answers to the question, “If I were to play this game, would I succeed?” Here, the researchers are asking, “If I were to play this game, would my actions lead to improved game performance?” This is actually closer to the definition of expectancy, part of Vroom’s VIE theory of motivation.

Regardless, this study does provide evidence that consideration of internal motivational processes is important in the development of games (and by extension, learning games). Modeling these complexities explicitly will be useful for better explaining player behavior.

- Trepte, S., & Reinecke, L. (2011). The pleasures of success: Game-related efficacy experiences as a mediator between player performance and game enjoyment. Cyberpsychology, Behavior, and Social Networking. DOI: 10.1089/cyber.2010.0358