One of the questions faced by survey designers is presentation order. Does it matter if I put the demographics first? Should I put the cognitive items up front because they require more attention? If I put 500 personality items in a row, will anyone actually complete this thing? Some recent research in the Journal of Business and Psychology reveals that placing demographic items at the beginning of a survey increases the response rate to those items in comparison to demographic items placed at the end. And more importantly, it did not affect scores on the three noncognitive measures that came afterward, in this case: leadership, conflict resolution, and culture and goals measures.

To investigate this, Teclaw, Price and Osatuke (2011) conducted a large survey (roughly N = 75,000) on behalf of the Veterans Health Administration. Respondents were randomly assigned to one of three surveys. Of those randomly assigned to the third survey, participants received one of seven scales, three of which were those listed above, resulting in a sample size for this study of N = 4,508. Respondents completing each of these three surveys were in turn randomly assigned to complete demographic items at either the beginning or the end of their survey. The authors compared response rates, considering both skipped items and “Don’t Know” responses to be a lack of response, and included all respondents who opened the survey regardless of how many questions were actually completed.

Response rates were indeed different. On the first of the three focal surveys, the response rate to demographics placed at the beginning of the survey was around 97%, while the response rate to demographics placed at the end was around 87%. While this isn’t a huge difference, if demographics are involved in your primary research questions (and they often are), then placing them at the beginning may be a good idea.

What’s especially interesting about this is that conventional wisdom is to place demographic items at the end. The argument that I have most often heard is that priming your survey respondents with their demographic characteristics (e.g. race) will lead them to respond differently than they otherwise would have. This is especially salient in the context of race-based stereotype threat, the tendency for minority group performance on cognitive measures to decrease as a result of anxiety associated with confirming negative stereotypes about intelligence. So what should we do?

There are two important facts about this study that limit its applicability. First, all measures investigated were noncognitive, i.e. survey items. Stereotype threat typically applies in contexts where there is a “right answer,” for example, knowledge tests or intelligence tests. So the placement of demographic items on such measures may still be important. Second, the study did not control for cognitive fatigue – survey length was confounded with experimental condition. Did response rates differ because the demographic items were at the beginning vs. the end, or simply because respondents had already responded to many, many items and were bored/tired/out of time/etc.? Would the effect still hold with a 20-item survey? A 50-item survey? We don’t really know.

If you’re giving a noncognitive voluntary survey, you are probably interested in demographics specifically and want to ensure they are responded to more so than any other items. For now, it appears to be safe to put demographic items up front if that is your goal. Whether your survey is 20 items or 200 items, it costs little to move the demographic items on your survey. But if your survey has cognitively loaded items, I’d still recommend against it.

- Teclaw, R., Price, M., & Osatuke, K. (2011). Demographic question placement: Effect on item response rates and means of a Veterans Health Administration survey. Journal of Business and Psychology. DOI: 10.1007/s10869-011-9249-y

One of the biggest challenges associated with this newfangled social media is demonstrating monetary return on investment (ROI). A properly run social media campaign can be very expensive, as it takes a lot of time to properly engage an audience. Up to this point, there has been little to link social media to ROI other than an intuitive sense from practitioners of “of course it must have value!” Fortunately, a new research study soon to be published in the Journal of Business Research finally ties social media marketing to some more tangible outcomes. Customers with better perceptions of social media marketing are more likely to purchase the brands represented there.

In their study, Kim and Ko (2011) first developed a list of luxury fashion brands to be the focus of the study. They did this by asking a team of fifteen graduate students to list three brands “that came to mind when thinking of luxury.” The list ultimately consisted of Louis Vuitton, Gucci, Burberry and Dolce & Gabbana, and from this list, Louis Vuitton was chosen to be the focus of the study. This made sense because the next phase of the study would be conducted in Korea, and this was a high-profile brand there with a strong social media presence.

Next, researchers staked out malls in luxury shopping districts in Seoul, where they intercepted shoppers and gave them a survey. Study participants were shown a picture of Louis Vuitton’s Facebook page and Twitter feeds before responding to questionnaires about them. The survey asked about shoppers’ perceptions of Louis Vuitton’s social media presence and of the Louis Vuitton brand. Of the 400 surveys collected, 362 contained complete data. This enabled researchers to examine how beliefs about social media were related to brand perceptions.

Using structural equation modeling, the authors examined three mediators of the relationship between social media marketing perceptions and purchase intentions/customer equity. If you aren’t familiar with mediation, the basic idea is that A affects B only through its effect on C. For example, eating candy does not itself make one happy – instead, it’s because candy is delicious that it makes one happy. Thus, candy creates deliciousness, which creates happiness. We would conclude from this that the relationship between candy and happiness is mediated by deliciousness.

Here are the three mediators tested:

- Value Equity. This is a customer’s assessment of how “worth it” the product is. If it’s priced well for a good product, you have very high value equity. If it’s priced badly for a poor product, you have very low value equity.

- Relationship Equity. This is a customer’s assessment of how loyal they are to a brand.

- Brand Equity. This is a customer’s assessment of the added value of the brand beyond the product itself; for example, a customer with high brand equity would perceive a Louis Vuitton product to be of greater value than an identical product from another brand.

Researchers looked at two outcomes:

- Purchase Intentions. Hopefully this one’s pretty obvious. However, the scope of this was not clear from the article – intentions within the next month, six months, year?

- Customer Equity. This is a customer’s assessment of their expected lifetime value with a brand, a combination of expected total purchases, purchase frequency, purchase volume, expected purchases over other brands, and a few other features.

The researchers found a relationship between perceptions of social media marketing and all three mediators, with the strongest relationships to brand equity and relationship equity. But the relationships between mediators and outcomes were more complex: only value equity and brand equity predicted purchase intentions, while only brand equity predicted customer equity.

Overall, we can conclude that customer perceptions of social media marketing are linked to purchase intentions through their effect on value equity. Or in other words, the better a customer perceives your social media marketing effort, the more likely they are to think your products give them more for their money, and the more likely they are to actually purchase something from you.

There are certainly some limitations. First, the study is limited to the variables chosen; there may be other mediators not examined that could affect outcomes. Second, actual behavioral outcomes (e.g. purchases) were not measured; we are relying on self-report of purchase intentions. Third, and most importantly, as a survey-based study, we can make no causal conclusions. So we cannot safely say “if you increase your social media marketing efforts, more people will intend to purchase your products.” That is left to future research. But even with these limitations, this marks the first explicit tying of social media efforts to measurable cash-related outcomes.

- Kim, A., & Ko, E. (2011). Do social media marketing activities enhance customer equity? An empirical study of luxury fashion brand. Journal of Business Research. DOI: 10.1016/j.jbusres.2011.10.014

Computing Intraclass Correlations (ICC) as Estimates of Interrater Reliability in SPSS

If you think my writing about statistics is clear below, consider my student-centered, practical and concise Step-by-Step Introduction to Statistics for Business for your undergraduate classes, available now from SAGE. Social scientists of all sorts will appreciate the ordinary, approachable language and practical value – each chapter starts with and discusses a young small business owner facing a problem solvable with statistics, a problem solved by the end of the chapter with the statistical kung-fu gained.

This article has been published in the Winnower. You can cite it as:

Landers, R.N. (2015). Computing intraclass correlations (ICC) as estimates of interrater reliability in SPSS. The Winnower 2:e143518.81744. DOI: 10.15200/winn.143518.81744

Recently, a colleague of mine asked for some advice on how to compute interrater reliability for a coding task, and I discovered that there aren’t many resources online written in an easy-to-understand format – most either 1) go in depth about formulas and computation or 2) go in depth about SPSS without giving many specific reasons for why you’d make several important decisions. The primary resource available is a 1979 paper by Shrout and Fleiss, which is quite dense. So I am taking a stab at providing a comprehensive but easier-to-understand resource.

Reliability, generally, is the proportion of “real” information about a construct of interest captured by your measurement of it. For example, if someone reported the reliability of their measure was .8, you could conclude that 80% of the variability in the scores captured by that measure represented the construct, and 20% represented random variation. The more uniform your measurement, the higher reliability will be.

In the social sciences, we often have research participants complete surveys, in which case you don’t need ICCs – you would more typically use coefficient alpha. But when you have research participants provide something about themselves from which you need to extract data, your measurement becomes what you get from that extraction. For example, in one of my lab’s current studies, we are collecting copies of Facebook profiles from research participants, after which a team of lab assistants looks them over and makes ratings based upon their content. This process is called coding. Because the research assistants are creating the data, their ratings are my scale – not the original data. Which means they 1) make mistakes and 2) vary in their ability to make those ratings. An estimate of interrater reliability will tell me what proportion of their ratings is “real”, i.e. represents an underlying construct (or potentially a combination of constructs – there is no way to know from reliability alone – all you can conclude is that you are measuring something consistently).

An intraclass correlation (ICC) can be a useful estimate of inter-rater reliability on quantitative data because it is highly flexible. A Pearson correlation can be a valid estimator of interrater reliability, but only when you have meaningful pairings between two and only two raters. What if you have more? What if your raters differ by ratee? This is where ICC comes in (note that if you have qualitative data, e.g. categorical data or ranks, you would not use ICC).

Unfortunately, this flexibility makes ICC a little more complicated than many estimators of reliability. While you can often just throw items into SPSS to compute a coefficient alpha on a scale measure, there are several additional questions one must ask when computing an ICC, and one restriction. The restriction is straightforward: you must have the same number of ratings for every case rated. The questions are more complicated, and their answers are based upon how you identified your raters, and what you ultimately want to do with your reliability estimate. Here are the first two questions:

1. Do you have consistent raters for all ratees? For example, do the exact same 8 raters make ratings on every ratee?

2. Do you have a sample or population of raters?

If your answer to Question 1 is no, you need ICC(1). In SPSS, this is called “One-Way Random.” In coding tasks, this is uncommon, since you can typically control the number of raters fairly carefully. It is most useful with massively large coding tasks. For example, if you had 2000 ratees to code, you might assign your 10 research assistants to make 400 ratings each – each ratee is rated by 2 research assistants (you always have 2 ratings per case), but you counterbalance them so that a random two raters make ratings on each subject. It’s called “One-Way Random” because 1) it makes no effort to disentangle the effects of the rater and ratee (i.e. one effect) and 2) it assumes these ratings are randomly drawn from a larger population (i.e. a random effects model). ICC(1) will always be the smallest of the ICCs.
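The counterbalancing described above can be sketched in a few lines of Python. This is only one possible scheme, and it assumes 2000 ratees, 10 research assistants, and 2 ratings per ratee; the function name `assign_raters` and the rotation trick are illustrative choices, not part of any particular coding protocol.

```python
import random
from collections import Counter


def assign_raters(n_ratees, n_raters, seed=0):
    """Give every ratee two different raters with equal workloads.

    Balance is exact only when n_ratees is a multiple of n_raters.
    """
    pairs = []
    for i in range(n_ratees):
        first = i % n_raters
        # Rotate the gap between the two raters so pairings vary.
        offset = 1 + (i // n_raters) % (n_raters - 1)
        second = (first + offset) % n_raters
        pairs.append((first, second))
    # Shuffle so that which ratee gets which pair is random.
    random.Random(seed).shuffle(pairs)
    return pairs  # pairs[j] holds the two rater IDs for ratee j


assignment = assign_raters(n_ratees=2000, n_raters=10)
workload = Counter(rater for pair in assignment for rater in pair)
print(sorted(workload.items()))  # each rater ID paired with its number of ratings
```

Because different ratees are coded by different pairs, the two columns of the resulting dataset are just “first rating” and “second rating”, which is exactly the situation ICC(1) is meant for.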

If your answer to Question 1 is yes and your answer to Question 2 is “sample”, you need ICC(2). In SPSS, this is called “Two-Way Random.” Unlike ICC(1), this ICC assumes that the variance of the raters is only adding noise to the estimate of the ratees, and that mean rater error = 0. Or in other words, while a particular rater might rate Ratee 1 high and Ratee 2 low, it should all even out across many raters. Like ICC(1), it assumes a random effects model for raters, but it explicitly models this effect – you can sort of think of it like “controlling for rater effects” when producing an estimate of reliability. If you have the same raters for each case, this is generally the model to go with. This will always be larger than ICC(1) and is represented in SPSS as “Two-Way Random” because 1) it models both an effect of rater and of ratee (i.e. two effects) and 2) assumes both are drawn randomly from larger populations (i.e. a random effects model).

If your answer to Question 1 is yes and your answer to Question 2 is “population”, you need ICC(3). In SPSS, this is called “Two-Way Mixed.” This ICC makes the same assumptions as ICC(2), but instead of treating rater effects as random, it treats them as fixed. This means that the raters in your task are the only raters anyone would be interested in. This is uncommon in coding, because theoretically your research assistants are only a few of an unlimited number of people that could make these ratings. This means ICC(3) will also always be larger than ICC(1) and typically larger than ICC(2), and is represented in SPSS as “Two-Way Mixed” because 1) it models both an effect of rater and of ratee (i.e. two effects) and 2) assumes a random effect of ratee but a fixed effect of rater (i.e. a mixed effects model).
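To make the difference between the three models concrete, here is a minimal sketch that computes the single-rater version of each from ANOVA mean squares, using the formulas given by Shrout and Fleiss (1979). The ratings table (6 ratees by 4 raters) is small illustrative data, and the function names are my own. Note that in these formulas ICC(2,1) is an absolute agreement estimate and ICC(3,1) is a consistency estimate.

```python
def mean_squares(ratings):
    """Return (BMS, WMS, JMS, EMS) for a ratee-by-rater table of ratings."""
    n = len(ratings)       # number of ratees (rows)
    k = len(ratings[0])    # number of raters (columns)
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(col) / n for col in zip(*ratings)]

    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_ratees = k * sum((m - grand) ** 2 for m in row_means)
    ss_raters = n * sum((m - grand) ** 2 for m in col_means)
    ss_error = ss_total - ss_ratees - ss_raters

    bms = ss_ratees / (n - 1)                     # between-ratee mean square
    wms = (ss_raters + ss_error) / (n * (k - 1))  # within-ratee mean square
    jms = ss_raters / (k - 1)                     # between-rater mean square
    ems = ss_error / ((n - 1) * (k - 1))          # residual mean square
    return bms, wms, jms, ems


def single_rater_iccs(ratings):
    """Single-rater ICCs for the one-way, two-way random and two-way mixed models."""
    n, k = len(ratings), len(ratings[0])
    bms, wms, jms, ems = mean_squares(ratings)
    return {
        "ICC(1,1)": (bms - wms) / (bms + (k - 1) * wms),
        "ICC(2,1)": (bms - ems) / (bms + (k - 1) * ems + k * (jms - ems) / n),
        "ICC(3,1)": (bms - ems) / (bms + (k - 1) * ems),
    }


# Illustrative data: one row per ratee, one column per rater.
ratings = [
    [9, 2, 5, 8],
    [6, 1, 3, 2],
    [8, 4, 6, 8],
    [7, 1, 2, 6],
    [10, 5, 6, 9],
    [6, 2, 4, 7],
]

iccs = single_rater_iccs(ratings)
for label, value in iccs.items():
    print(f"{label} = {value:.2f}")
```

With this table the three estimates come out at roughly .17, .29 and .71, which follows the ordering described above: the raters here differ a lot in their average rating, so the models that account for rater effects produce larger values.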

After you’ve determined which kind of ICC you need, there is a second decision to be made: are you interested in the reliability of a single rater, or of their mean? If you’re coding for research, you’re probably going to use the mean rating. If you’re coding to determine how accurate a single person would be if they made the ratings on their own, you’re interested in the reliability of a single rater. For example, in our Facebook study, we want to know both. First, we might ask “what is the reliability of our ratings?” Second, we might ask “if one person were to make these judgments from a Facebook profile, how accurate would that person be?” We add “,k” to the ICC label when looking at means, or “,1” when looking at the reliability of single raters. For example, if you computed an ICC(2) with 8 raters, you’d be computing ICC(2,8). If you computed an ICC(2) with the same 16 raters for every case but were interested in a single rater, you’d still be computing ICC(2,1). For ICC(#,1), a large number of raters will produce a narrower confidence interval around your reliability estimate than a small number of raters, which is why you’d still want a large number of raters, if possible, when estimating ICC(#,1).

After you’ve determined which specificity you need, the third decision is to figure out whether you need a measure of absolute agreement or consistency. If you’ve studied correlation, you’re probably already familiar with this concept: if two variables are perfectly consistent, they don’t necessarily agree. For example, consider Variable 1 with values 1, 2, 3 and Variable 2 with values 7, 8, 9. Even though these scores are very different, the correlation between them is 1 – so they are highly consistent but don’t agree. If using a mean [ICC(#, k)], consistency is typically fine, especially for coding tasks, as mean differences between raters won’t affect subsequent analyses on that data. But if you are interested in determining the reliability for a single individual, you probably want to know how well that score will assess the real value.
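The 1, 2, 3 versus 7, 8, 9 example can be run through both versions of a two-way, single-rater ICC to see the gap. This is a sketch under the Shrout and Fleiss (1979) mean-square formulas; the function name `two_way_single_icc` is hypothetical and simply switches the rater-variance term in the denominator on or off.

```python
def two_way_single_icc(ratings, absolute_agreement):
    """Two-way, single-rater ICC as consistency or absolute agreement."""
    n, k = len(ratings), len(ratings[0])
    grand = sum(map(sum, ratings)) / (n * k)
    ss_rows = k * sum((sum(r) / k - grand) ** 2 for r in ratings)
    ss_cols = n * sum((sum(c) / n - grand) ** 2 for c in zip(*ratings))
    ss_total = sum((x - grand) ** 2 for r in ratings for x in r)

    bms = ss_rows / (n - 1)                                     # ratees
    jms = ss_cols / (k - 1)                                     # raters
    ems = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))  # residual

    denominator = bms + (k - 1) * ems
    if absolute_agreement:
        # Mean differences between raters count against agreement.
        denominator += k * (jms - ems) / n
    return (bms - ems) / denominator


# Rows are ratees; column 1 is Variable 1 and column 2 is Variable 2.
ratings = [[1, 7], [2, 8], [3, 9]]

consistency = two_way_single_icc(ratings, absolute_agreement=False)
agreement = two_way_single_icc(ratings, absolute_agreement=True)
print(f"consistency = {consistency:.2f}, absolute agreement = {agreement:.2f}")
# consistency = 1.00, absolute agreement = 0.05
```

The two raters order the ratees identically, so consistency is perfect, but the constant 6-point gap between them drives absolute agreement close to zero.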

Once you know what kind of ICC you want, it’s pretty easy in SPSS. First, create a dataset with columns representing raters (e.g. if you had 8 raters, you’d have 8 columns) and rows representing cases. You’ll need a complete dataset for each variable you are interested in. So if you wanted to assess the reliability for 8 raters on 50 cases across 10 variables being rated, you’d have 10 datasets containing 8 columns and 50 rows (400 data points per dataset, 4000 total points of data).
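If your ratings are stored one rating per record instead, the layout above can be built with a short script before importing into SPSS. The following is a minimal sketch; the record format `(case, rater, rating)`, the rater names, and the function `to_wide` are all assumptions for illustration. It also enforces the restriction that every case must have the same number of ratings.

```python
from collections import defaultdict


def to_wide(records, raters):
    """Pivot (case, rater, rating) records into one row per case, one column per rater."""
    by_case = defaultdict(dict)
    for case, rater, rating in records:
        by_case[case][rater] = rating

    rows = []
    for case in sorted(by_case):
        missing = [r for r in raters if r not in by_case[case]]
        if missing:
            # ICC needs a complete table: the same number of ratings per case.
            raise ValueError(f"case {case} is missing ratings from {missing}")
        rows.append([by_case[case][r] for r in raters])
    return rows


raters = ["rater_A", "rater_B", "rater_C"]
records = [
    (1, "rater_A", 4), (1, "rater_B", 5), (1, "rater_C", 4),
    (2, "rater_A", 2), (2, "rater_B", 3), (2, "rater_C", 2),
]

wide = to_wide(records, raters)
print(wide)  # [[4, 5, 4], [2, 3, 2]]
```

You would repeat this once per rated variable, giving one table per variable.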

A special note for those of you using surveys: if you’re interested in the inter-rater reliability of a scale mean, compute ICC on that scale mean – not the individual items. For example, if you have a 10-item unidimensional scale, calculate the scale mean for each of your rater/target combinations first (i.e. one mean score per rater per ratee), and then use that scale mean as the target of your computation of ICC. Don’t worry about the inter-rater reliability of the individual items unless you are doing so as part of a scale development process, i.e. you are assessing scale reliability in a pilot sample in order to cut some items from your final scale, which you will later cross-validate in a second sample.
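As a rough sketch of that preprocessing step, the snippet below collapses a 10-item scale into one mean per rater per ratee and arranges those means in the rows-by-raters layout used for ICC. The item scores and the ratee and rater labels are made up for illustration.

```python
# Made-up item-level scores: (ratee, rater) -> responses to the 10 scale items.
item_scores = {
    ("ratee_1", "rater_A"): [4, 5, 4, 3, 4, 5, 4, 4, 3, 4],
    ("ratee_1", "rater_B"): [3, 4, 4, 3, 4, 4, 3, 4, 3, 3],
    ("ratee_2", "rater_A"): [2, 2, 1, 2, 3, 2, 2, 1, 2, 3],
    ("ratee_2", "rater_B"): [2, 3, 2, 2, 3, 3, 2, 2, 3, 3],
}

# One scale mean per rater/ratee combination.
scale_means = {pair: sum(items) / len(items) for pair, items in item_scores.items()}

# Arrange the means with one row per ratee and one column per rater.
ratees = sorted({ratee for ratee, _ in scale_means})
raters = sorted({rater for _, rater in scale_means})
icc_input = [[scale_means[(ratee, rater)] for rater in raters] for ratee in ratees]

print(icc_input)  # [[4.0, 3.5], [2.0, 2.5]]
```

The ICC is then computed on `icc_input`, not on the 10 individual items.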

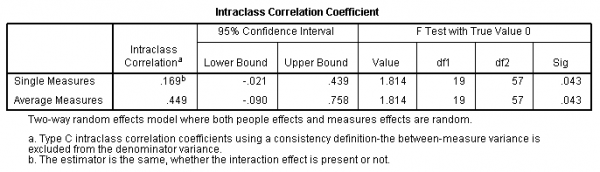

In each dataset, you then need to open the Analyze menu, select Scale, and click on Reliability Analysis. Move all of your rater variables to the right for analysis. Click Statistics and check Intraclass correlation coefficient at the bottom. Specify your model (One-Way Random, Two-Way Random, or Two-Way Mixed) and type (Consistency or Absolute Agreement). Click Continue and OK. You should end up with something like this:

In this example, I computed an ICC(2) with 4 raters across 20 ratees. You can find the ICC(2,1) in the first line – ICC(2,1) = .169. The average-measures value, ICC(2,k), which in this case is ICC(2,4), is .449. Therefore, 44.9% of the variance in the mean of these raters is “real”.
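The two values in that output are tied together by the Spearman-Brown formula, which steps a single-rater reliability up to the reliability of the mean of k raters. A quick check in Python, using only the numbers reported above (the function name is my own):

```python
def average_measures_icc(single_icc, k):
    """Spearman-Brown step-up: reliability of the mean of k raters."""
    return k * single_icc / (1 + (k - 1) * single_icc)


icc_2_4 = average_measures_icc(0.169, k=4)
print(round(icc_2_4, 3))  # 0.449
```

This is also why adding raters helps when you plan to use the mean: with the same single-rater reliability, a larger k pushes the average-measures value higher.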

So here’s the summary of this whole process:

- Decide which category of ICC you need.
  - Determine if you have consistent raters across all ratees (e.g. always 3 raters, and always the same 3 raters). If not, use ICC(1), which is “One-Way Random” in SPSS.
  - Determine if you have a population of raters. If yes, use ICC(3), which is “Two-Way Mixed” in SPSS.
  - If you didn’t use ICC(1) or ICC(3), you need ICC(2), which assumes a sample of raters, and is “Two-Way Random” in SPSS.
- Determine which value you will ultimately use.
  - If a single individual, you want ICC(#,1), which is “Single Measure” in SPSS.
  - If the mean, you want ICC(#,k), which is “Average Measures” in SPSS.
- Determine which set of values you ultimately want the reliability for.
  - If you want to use the subsequent values for other analyses, you probably want to assess consistency.
  - If you want to know the reliability of individual scores, you probably want to assess absolute agreement.
- Run the analysis in SPSS.
  - Analyze>Scale>Reliability Analysis.
  - Select Statistics.
  - Check “Intraclass correlation coefficient”.
  - Make choices as you decided above.
  - Click Continue.
  - Click OK.
- Interpret output.
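The decisions in this summary can also be written down as a small lookup function. The sketch below only mirrors the rules of thumb above; the function and argument names are hypothetical, and the returned strings are the SPSS labels quoted in this post.

```python
def choose_icc(same_raters, raters_are_population, use_mean, n_raters,
               need_absolute_agreement):
    """Map the three decisions onto an ICC label and the SPSS options to pick."""
    # Decision 1: which category of ICC?
    if not same_raters:
        number, model = 1, "One-Way Random"
    elif raters_are_population:
        number, model = 3, "Two-Way Mixed"
    else:
        number, model = 2, "Two-Way Random"

    # Decision 2: reliability of a single rater or of the mean of k raters?
    unit = n_raters if use_mean else 1
    output_row = "Average Measures" if use_mean else "Single Measure"

    # Decision 3: consistency or absolute agreement?
    icc_type = "Absolute Agreement" if need_absolute_agreement else "Consistency"

    return {
        "label": f"ICC({number},{unit})",
        "spss_model": model,
        "spss_type": icc_type,
        "spss_output_row": output_row,
    }


# Same 8 raters for every ratee, raters are a sample, mean ratings used in later analyses.
choice = choose_icc(same_raters=True, raters_are_population=False,
                    use_mean=True, n_raters=8, need_absolute_agreement=False)
print(choice["label"], "|", choice["spss_model"], "|",
      choice["spss_type"], "|", choice["spss_output_row"])
# ICC(2,8) | Two-Way Random | Consistency | Average Measures
```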

- Shrout, P., & Fleiss, J. (1979). Intraclass correlations: Uses in assessing rater reliability. Psychological Bulletin, 86 (2), 420-428. DOI: 10.1037/0033-2909.86.2.420