Survey Provider and Sponsor Reputation Influence Survey Participation

A recent study by Fang, Wen and Pavur¹ investigated the extent to which the reputations of survey sponsors (e.g. corporations) and technology providers (e.g. SurveyMonkey) affect people's willingness to participate in surveys. They discovered an interaction between the two and concluded, “A sponsoring corporation with a weak reputation who contracts with a survey provider having a strong reputation results in increased participation willingness from potential respondents if the identity of the sponsoring corporation is disguised in a survey.”

They discovered this with a series of 3 fairly small experiments (N=100, 100, 200) with Chinese participants. In each experiment, “strong reputation” providers were picked from well-known Chinese firms while the “weak reputation” providers were fictitious. So “strong” and “weak” might be better described as “having a strong reputation” versus “not having any reputation”.

In each experiment, participants were exposed to both strong and weak providers, so all results here are within-subject. The surveys were counterbalanced so as to neutralize order effects. Participants looked at several surveys and then rated their willingness to take each. So what did they do in each?

- Experiment 1 examined the effect of sponsoring corporation reputation and found a small positive effect of corporate reputation (d = .17).

- Experiment 2 examined the effect of technology provider reputation and found a moderate positive effect of provider reputation (d = .30).

- Experiment 3 examined both simultaneously and found both main effects and an interaction between the two. When the sponsoring corporation's reputation was weak, technology provider reputation did not matter. When the sponsoring corporation's reputation was strong, a strong technology provider reputation was even more beneficial.
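To make the difference between main effects and an interaction concrete, here is a small Python sketch for a 2×2 design like Experiment 3's. The cell means are invented for illustration (they are not the study's data) and simply mimic the pattern described above: provider reputation makes no difference under a weak sponsor but helps under a strong one. The function name `two_by_two_effects` is hypothetical.

```python
Cells = dict[tuple[str, str], float]


def two_by_two_effects(means: Cells) -> dict[str, float]:
    """Main effects and interaction contrast for a 2x2 design.

    `means` maps (sponsor_level, provider_level) to mean willingness,
    where each level is "weak" or "strong".
    """
    ww = means[("weak", "weak")]
    ws = means[("weak", "strong")]
    sw = means[("strong", "weak")]
    ss = means[("strong", "strong")]
    # Provider effect computed separately at each sponsor level ("simple effects").
    provider_given_weak_sponsor = ws - ww
    provider_given_strong_sponsor = ss - sw
    return {
        # Main effects: difference between level means, averaged over the other factor.
        "sponsor_main": ((sw + ss) - (ww + ws)) / 2,
        "provider_main": ((ws + ss) - (ww + sw)) / 2,
        "provider_given_weak_sponsor": provider_given_weak_sponsor,
        "provider_given_strong_sponsor": provider_given_strong_sponsor,
        # Interaction: how much the provider effect changes with sponsor reputation.
        "interaction": provider_given_strong_sponsor - provider_given_weak_sponsor,
    }


# Hypothetical cell means on an arbitrary willingness scale (NOT from the study).
example = {
    ("weak", "weak"): 3.0,
    ("weak", "strong"): 3.0,
    ("strong", "weak"): 3.25,
    ("strong", "strong"): 3.75,
}
print(two_by_two_effects(example))
```

In this made-up example both main effects are positive even though the provider only matters when the sponsor is strong, which is why the interaction term has to be read alongside the main effects.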

So as far as I can tell, the broad conclusion stated above was not actually tested anywhere. Instead, what we can safely conclude is that technology provider reputation is most important, while sponsor reputation also plays a role. Having a strong reputation on both sides is better still. Because the study operationalized low reputation as “no reputation,” the effect may be even larger when compared against institutions with genuinely poor reputations. And finally, it is unclear to what extent Chinese respondents resemble respondents of other nationalities. In the United States, for example, corporate sponsorship might be met with more skepticism.

- Fang, J., Wen, C., & Pavur, R. (2012). Participation willingness in web surveys: Exploring effect of sponsoring corporation’s and survey provider’s reputation. Cyberpsychology, Behavior, and Social Networking. doi:10.1089/cyber.2011.0411

In a recent article in The Industrial-Organizational Psychologist, Blackhurst, Congemi, Meyer and Sachau¹ examined the appropriateness of e-mail addresses from 14,718 people who had applied for entry-level manufacturing jobs. The researchers found that roughly 25% of the e-mail addresses were inappropriate or antisocial, and that the degree of inappropriateness predicted several qualities of interest to hiring managers: conscientiousness, professionalism, and work-related experience. Interestingly, cognitive ability was not related.

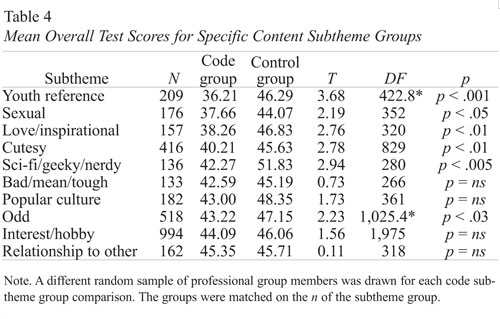

The types of e-mail addresses found appear in the table below. These categories were extracted by a team of 25 graduate students with high inter-rater reliability (a subsample of 1,000 addresses was used to establish it).

The graduate students next categorized all of the roughly 15,000 e-mail addresses (600 addresses assigned to each of the 25 students). At the same time, each student coded the addresses as “appropriate when applying to a job,” “questionable,” or “inappropriate when applying to a job.” Afterward, one of the researchers reviewed all 15,000 and brought any questionable judgments to a 3-person panel for discussion. The researchers then compared mean scores on cognitive ability, conscientiousness, professionalism, and work-related experience across applicants with appropriate, questionable, and inappropriate e-mail addresses. Statistically significant differences were found on all dimensions.

Unfortunately, statistical significance is easy to attain in this sample. Even tiny effects will be statistically significant. The article did not report any standard deviations to give us a sense of effect sizes, so I had to do a little detective work. Here’s a table comparing outcomes for specific subtypes of inappropriate e-mail addresses:

This is the only table containing means, and fortunately it also reports degrees of freedom, which means we can reverse-engineer the t-test formula to estimate the standard deviation of this scale. It’s not perfect, but it’s the best available. Because these are independent-samples t-tests, t equals the mean difference (here, code group minus control group) divided by the pooled standard error (roughly s/sqrt(N)), and we can get N by adding 2 to df. (Some of the numbers are a little odd here; for example, df should equal 2N − 2, but it doesn’t, so this is my best guess.) If we assume the SD of each group is equal, we can solve for s with s = sqrt(N) × (mean difference) / t.

That produces SDs between 54.6 and 58.9 for the groups here, so if we assume these SDs hold for the other scales, the differences on the predictors between appropriate and inappropriate e-mail addresses range from d = 0.00 to d = 0.11. These are not big effects by any stretch of the imagination. But they are effects, roughly in line with what we’d expect from the intercorrelations between psychological predictors and performance generally.
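The back-calculation can be sketched in Python. This is a minimal sketch that uses the textbook pooled-variance form of the independent-samples t-test, t = (mean difference) / (s_p × sqrt(1/n1 + 1/n2)), rather than the rougher s/sqrt(N) shortcut described in the text, so it will not reproduce the 54.6 to 58.9 figures exactly. The function names and the group sizes in the example are hypothetical. It also shows why sample size matters for the significance point: d can be recovered directly from t and the group sizes, and a tiny d yields a large t when the groups are big.

```python
import math


def pooled_sd_from_t(mean_diff: float, t: float, n1: int, n2: int) -> float:
    """Back out the pooled SD from an independent-samples t statistic.

    Rearranges t = mean_diff / (s_p * sqrt(1/n1 + 1/n2)), which assumes
    equal variances in the two groups.
    """
    return abs(mean_diff) / (abs(t) * math.sqrt(1 / n1 + 1 / n2))


def d_from_t(t: float, n1: int, n2: int) -> float:
    """Cohen's d = mean_diff / s_p, which simplifies to t * sqrt(1/n1 + 1/n2)."""
    return t * math.sqrt(1 / n1 + 1 / n2)


def t_from_d(d: float, n1: int, n2: int) -> float:
    """Inverse of d_from_t: the t statistic a given d produces at these sample sizes."""
    return d / math.sqrt(1 / n1 + 1 / n2)


def two_sided_p_normal(t: float) -> float:
    """Two-sided p-value from a normal approximation (reasonable when df is in the thousands)."""
    return math.erfc(abs(t) / math.sqrt(2))


# A tiny effect (d = 0.05) with two hypothetical groups of 7,500 applicants each.
t = t_from_d(0.05, 7500, 7500)
print(f"t = {t:.2f}, p = {two_sided_p_normal(t):.4f}")
```

With groups that large, the d = 0.05 example gives t of about 3, with p well below .01, which is the sense in which "even tiny effects will be statistically significant."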

The study is not without limitations. All of the measures were provided by a consulting firm, so we have no way to independently verify their content. The e-mail address ratings were also made by graduate students, and it is not clear how well their judgments would generalize to actual hiring managers. Actual hiring decisions made later were not available, nor was job performance data, so validation evidence is missing. All we really have is a new correlate of predictors.

Interestingly, the study also found that roughly 5% of e-mail addresses contained information that looked like a date. Given legislation forbidding discrimination on the basis of age, it is unclear whether it is legal for hiring managers to have access to this information. Although e-mail address appropriateness predicts characteristics of interest (and thus should potentially be included in a packet of information used for hiring), the address may itself contain information that is inappropriate for a manager to see (and thus should not be included). Further research is needed on this point.

- Blackhurst, E., Congemi, P., Meyer, J., & Sachau, D. (2011). Should you hire BlazinWeedClown@Mail.Com? The Industrial/Organizational Psychologist, 49(2), 27-38.

A recent study by Information Solutions Group, sponsored by PopCap Games, led Gamasutra to claim:

A new study from PopCap Games finds that those who cheat while playing social games are nearly 3.5 times more likely to be dishonest in the real world than non-cheaters, with offenses ranging from cheating on taxes to illegally parking in handicapped spaces.

Although it doesn’t say so explicitly, that sentence is pretty obviously phrased to lead you to believe that cheating in social games leads to cheating in real life. Since this was most likely a survey study, it seemed quite unlikely that they could draw causal conclusions like that. So I went looking for the original study, which you can find for yourself here.

From that, it is clear that this was in fact a survey: a web-based presentation of 38 questions to a sample that ultimately consisted of 801 US respondents and 400 UK respondents (total N = 1,201). The study specifically excluded anyone who played less than 15 minutes of social games per week. There’s no discussion of how many respondents completed the survey but were excluded, so it’s not very clear how well this survey would generalize to gamers in general (or any larger group).

The survey report starts by emphasizing the growing importance of social games, citing another study that estimates 118.5 million social gamers in the US and UK in 2011, about a 17% increase from January 2010. There are a lot of social gamers; no surprise there. In PopCap’s study, they further estimated that about 81 million play at least once per day, with 49 million playing more than once per day.

The report continued by exploring the profiles of current social gamers: mostly women (55%) with a mean age of 39 (down slightly from the previous survey). They play these games because they think the games are fun, competitive, stress relieving, and a mental workout. I’m curious exactly which social games are a mental workout (FarmVille?), but the report doesn’t say.

8% of respondents reported using hacks, bots, or cheats in a social game, with 11% saying they had considered it but had not actually tried it. That actually seems a bit high to me, and I wonder how their sample was located; they do not say. If their sample is loaded more toward (and bear with me here) “hardcore social gamers,” the rest of their results are a little less trustworthy. Without details on sampling, there’s no way to know.

Imagine my surprise when I reached the end of the report with absolutely no mention of the finding Gamasutra reports above. You are welcome to search for yourself (and if you find it, please let me know!), but after scanning through page by page, I searched the text for “cheat”, and for the specific percentages reported by Gamasutra. Nothing. So we are left to simply trust Gamasutra’s reporting with no verifiable source. That’s not that uncommon, but it is a bit suspicious when they point to a PDF report to provide support for their statement.

Without that support, there’s not much available to analyze, but we can at least say that the reporting above is a bit misleading. Here are several possible explanations for the reported cheating correlation, assuming it is accurate in the first place:

- People who cheat in social games are rewarded for doing so, and that leads them to cheat in real life.

- People who cheat in real life are rewarded for doing so, and that leads them to cheat in social games.

- People who self-report cheating in one category are more likely to self-report cheating in another category.

- There is an underlying psychological characteristic (e.g. integrity) that leads to cheating behaviors across situations.

As you can probably guess, the last two are more probable than the first two. Although it’s tempting to attribute causality here (much as in the debate over whether violent video games cause real-world violence), there is no evidence to suggest this; correlation is not causation. It is more likely that cheaters are cheaters, regardless of context. We’ve just found a new way to identify them.
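To see how a shared trait alone can produce a headline like "X times more likely," here is a minimal Python simulation under a made-up latent-trait model. The trait, the thresholds, and the function name `risk_ratio` are hypothetical choices for illustration, not anything from the PopCap report. Each simulated person gets one trait score, and cheating in a game and cheating in real life each depend on that score plus independent noise, with no causal path between the two behaviors. The game threshold is set so that roughly 8% of simulated people cheat in games, echoing the survey's self-report figure.

```python
import random


def risk_ratio(n: int = 100_000, shared_trait: bool = True, seed: int = 1) -> float:
    """Return P(real-life cheating | game cheater) / P(real-life cheating | non-cheater)
    under a toy latent-trait model. Nothing here comes from the PopCap data."""
    rng = random.Random(seed)
    # counts[is_game_cheater] = [people in group, real-life cheaters in group]
    counts = {True: [0, 0], False: [0, 0]}
    for _ in range(n):
        # Hypothetical latent trait (think of low scores as low integrity).
        trait = rng.gauss(0, 1)
        # With a shared trait, both behaviors draw on the same score;
        # otherwise real-life cheating gets its own, unrelated score.
        trait_life = trait if shared_trait else rng.gauss(0, 1)
        # Each behavior = trait + independent noise, compared with an arbitrary
        # threshold. Neither behavior appears in the other's formula,
        # so there is no causal link between them.
        cheats_in_game = trait + rng.gauss(0, 1) < -2.0
        cheats_in_life = trait_life + rng.gauss(0, 1) < -1.0
        counts[cheats_in_game][0] += 1
        counts[cheats_in_game][1] += cheats_in_life
    rate_cheaters = counts[True][1] / counts[True][0]
    rate_others = counts[False][1] / counts[False][0]
    return rate_cheaters / rate_others


print(f"shared trait:       {risk_ratio(shared_trait=True):.2f}x")
print(f"independent traits: {risk_ratio(shared_trait=False):.2f}x")
```

With the shared trait, simulated game cheaters come out several times more likely to cheat in real life even though neither behavior causes the other; give each behavior its own unrelated trait and the ratio falls to about 1.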