Evaluating Organizational Training Success Improves Later Application by Employees

In a recent study appearing in the International Journal of Training and Development, researchers Saks and Burke (2012) found that the frequencies of behavioral and results-based training evaluation were related to actual transfer of training material. In other words, organizations that evaluated behavior changes and monetary benefits resulting from training tended to have better results from that training. In their words:

Overall, the results of this study suggest a training evaluation-transfer of training paradox: organizations are most likely to evaluate trainee reactions and learning, and yet only behavior and results criteria are significantly related to higher rates of training transfer.

The authors investigated this by surveying 150 members of a T&D association in Canada. They asked respondents to identify the extent to which employees actually made good use of organizational training initiatives and to report how often their organization evaluated its training programs. Kirkpatrick’s 4-level training evaluation model (reactions, learning, behavior, and results) was used as a framework for these questions. Consistent with prior studies, the researchers found that evaluation of trainee reactions was by far the most common (M = 4.08 on a scale of 1 [never] to 5 [always]), followed by learning (M = 2.85), behavior (M = 2.59), and results (M = 2.22).

Frequency of training evaluation predicted immediate transfer and far transfer (6 months and 1 year later). Correlations generally decreased at the later time points, as would be expected. When looking at specific types of evaluative criteria, only behavior and results were statistically significantly related to transfer variables, with moderate effects (r = .28 to .43). Relationships with reactions and learning were much weaker (r = .02 to .18).

This indicates that organizations that evaluate behavior and results tend to have better transfer, at least as rated by those responsible for implementing those training programs. As a survey study, these results do not speak to causation. While the act of evaluating may improve transfer, organizations that evaluate may simply pay more attention to their training programs in general than organizations that do not evaluate. The researchers controlled for organizational age and the size of the firm, but these controls would not address this issue. More in-depth consideration of the source of variance in transfer is needed. This study also relied solely on T&D professionals’ impressions of their organizations, which may not reflect actual transfer. Research with real organizational transfer data is needed to address this limitation.

- Saks, A., & Burke, L. (2012). An investigation into the relationship between training evaluation and the transfer of training. International Journal of Training and Development. DOI: 10.1111/j.1468-2419.2011.00397.x

New research by Guillory and Hancock (2012) reveals that personal information provided on LinkedIn may contain fewer deceptions about prior work experience and prior work responsibilities than traditional resumes. However, LinkedIn profiles contain more deceptions about personal interests and hobbies. The researchers believe this may be because participants are equally motivated to deceive employers in both settings, but perceive lies about work experience on LinkedIn as more easily verifiable.

To explore this issue, the researchers conducted an experiment in which 119 undergraduates were randomly assigned to create a resume, a public LinkedIn profile, or a private LinkedIn profile. None of the research participants reported having a LinkedIn profile already. The researchers then presented the undergraduates with a job advertisement and told subjects in the resume condition that the best resume would receive a $100 incentive. All participants were then interviewed and given a worksheet to explain any ways they had been deceptive. To put research participants at ease when writing about their behaviors, the researchers explained that lying on resumes and job materials was common (which seems to be true: as many as 90% of job-seekers lie on resumes, according to one conference paper cited by the authors). The researchers then coded this information by type, using a taxonomy they developed: information about responsibilities, abilities, involvement, and interests.

On average, participants lied 2.87 times, with a maximum of 8. The researchers then tested the prevalence of lying in general and found that the number of lies did not differ by condition. However, lies by category did differ by condition: more lies about responsibilities appeared in traditional resumes, while more lies about interests appeared in LinkedIn profiles. The researchers attribute these differences to differing goals in self-presentation given the constraints of the medium. On LinkedIn, claims about professional accomplishments are perceived as more easily verifiable, so deceptive behavior shifts to something that can’t be easily verified (from specific responsibilities to interests).

The unbalanced monetary reward is a bit of a concern. We may simply be seeing an effect of one condition receiving cash for participation. This is somewhat mitigated by the fact that the participants really were creating LinkedIn profiles. That effort was not necessarily research-based; participants were actually trying to represent themselves for potential employers. But the extent to which they would have been incrementally motivated by cash in the LinkedIn conditions is unknown.

- Guillory, J., & Hancock, J. (2012). The Effect of Linkedin on Deception in Resumes. Cyberpsychology, Behavior, and Social Networking. DOI: 10.1089/cyber.2011.0389

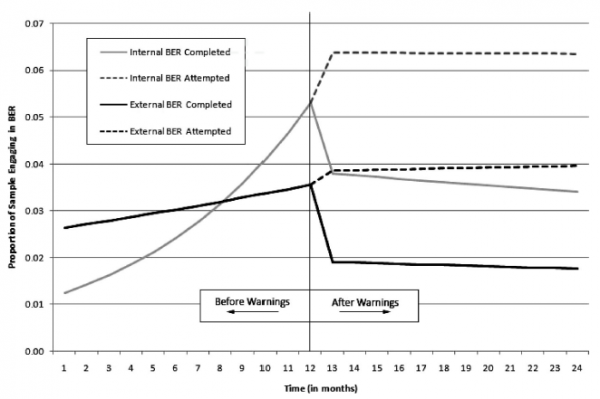

A recent article by Landers, Sackett, and Tuzinski (2011) investigated how 32,311 managerial applicants at a nationwide retailer completed a personality test for promotion to or selection into the position. Up to 6% of the sample (nearly 2000 applicants) distorted their responses on the personality test by responding with only the extreme ends of the scale, a phenomenon the authors labeled “blatant extreme responding” (BER). The percentage of applicants responding with BER dropped about 2% after an interactive warning was implemented.

The logic of the applicants’ response strategy is easy to appreciate. On a multiple-choice personality survey, one approach to maximizing your score might be to respond only “strongly agree” or “strongly disagree”, which are in effect the extreme responses. By identifying which answer was “best,” an applicant could get the maximum possible score on such a test. It’s important to note, though, that not all tests are scored like this; there are many where BER would result in a very low score.
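To make that logic concrete, here is a minimal sketch of why extreme responding can maximize a score under simple summed scoring. Everything in it is an assumption for illustration: the 5-point scale, the `score_test` function, and the choice of reverse-keyed items are hypothetical and are not the scoring key used in the study.

```python
# Hypothetical summed scoring on a 5-point scale (1 = strongly disagree,
# 5 = strongly agree). This is NOT the scoring key from the study.

def score_test(responses, reverse_keyed):
    """Sum item scores, flipping reverse-keyed items (1<->5, 2<->4)."""
    total = 0
    for item, answer in enumerate(responses):
        total += (6 - answer) if item in reverse_keyed else answer
    return total

# Assume items 1 and 3 are reverse-keyed, so "strongly disagree" is "best" there.
reverse_keyed = {1, 3}

# An applicant who guesses the "best" direction and always picks the extreme.
ber_responses = [5, 1, 5, 1, 5]
# An applicant who leans the same way but avoids the extremes.
moderate_responses = [4, 2, 4, 2, 4]

ber_score = score_test(ber_responses, reverse_keyed)
moderate_score = score_test(moderate_responses, reverse_keyed)
print(ber_score, moderate_score)
```

Under this assumed scoring rule, the extreme responder reaches the maximum possible total of 25, while the moderate responder gets 20. A test scored differently could penalize the same pattern.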

In this study, applicants were both internal (lower-level managers applying for promotions) and external (applicants from outside the organization). Among the internal applicants, a rumor spread among managers that BER would help them beat the test. This resulted in an increase in BER over the first 12 months that the test was available.

At the 12-month mark, the organization implemented an interactive warning – a process only possible in online testing. If BER was detected on the first page of the personality test, a warning popped up. This was successful in reducing the rate of BER at test completion (see the difference between the dashed and solid lines in the figure above).
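A first-page check of this kind could be sketched roughly as follows. This is only an illustration: the post does not state the detection rule the organization used, so the rule here (every first-page answer sits at an endpoint of an assumed 5-point scale) and the names `is_blatant_extreme` and `first_page_check` are hypothetical.

```python
# Hypothetical BER check; the study's actual detection criterion is not
# described in this post, so the rule below is an assumption.

def is_blatant_extreme(responses, low=1, high=5):
    """Return True if every answer is at one of the two scale endpoints."""
    return len(responses) > 0 and all(r in (low, high) for r in responses)

def first_page_check(first_page_responses):
    """Decide what an online test might do after the first page is submitted."""
    if is_blatant_extreme(first_page_responses):
        return "warn"      # show the interactive warning
    return "continue"      # proceed to the next page as usual

print(first_page_check([5, 1, 5, 5, 1]))  # all endpoints
print(first_page_check([5, 4, 1, 5, 1]))  # one non-extreme answer
```

Because the check runs as soon as the first page is submitted, the warning can appear while the applicant still has the rest of the test to complete, which is what makes this approach specific to online administration.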

So, the take-home? The authors describe a real-world setting in which applicants lied on a personality test. This lying was detectable, and warnings reduced the effect. If you’re implementing online personality testing, maybe you should use warnings too!

- Landers, R., Sackett, P., & Tuzinski, K. (2011). Retesting after initial failure, coaching rumors, and warnings against faking in online personality measures for selection. Journal of Applied Psychology, 96 (1), 202-210. DOI: 10.1037/a0020375