Deconstructing the News Media to Learn Statistics

When I teach undergraduate statistics, I often include an assignment where I ask my students to take a specific chart or figure found in the news media and explain it rationally. Where did the numbers come from? What does it really mean? How much trust can we put in the accuracy of this figure? Is this figure deliberately trying to mislead you?

The especially sad part of this is that finding misleading figures for the assignment is not very difficult. The news media’s goal is to intrigue and entertain, and that often means that accuracy falls by the wayside.

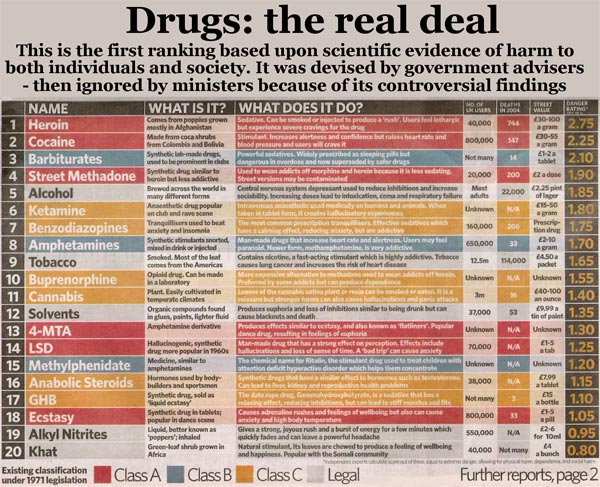

Today, one such figure jumped out at me. It’s not only from the news media, but it’s also been helpfully augmented by a reader to provide a social message:

I don’t necessarily disagree with the content of this chart; my objection is to the silly way it’s presented in the graphic. The top says, “this is the first ranking based upon scientific evidence.” Well, maybe.

The very bottom of the chart gives some fine-print details. If you can’t read it above, here it is: “independent experts calculate score out of three: equal to extreme danger, allowing for physical harm, dependence, and social harm.” That provides little direction, and the poor grammar doesn’t help! What follows are the thoughts I had in the first 5-10 seconds after looking at this chart.

- We have no information on how many experts there are or where they came from. This could be anyone! The graph implicitly asks us to trust the expertise of the people making the ratings, yet no credentials are provided.

- “equal to extreme danger, allowing for physical harm, dependence, and social harm” is vague, but it appears that extreme danger is the overall score, while physical harm, dependence, and social harm are the three dimensions of extreme danger. That’s a starting point for understanding what this table really tells us.

- We can try to make some educated guesses as to where the specific numbers came from. Since the “danger rating” is out of 3 (also written in very small print in the table header), the three apparent dimensions were likely each scored on a scale of 0 to 1 (or perhaps 0% to 100%) and then combined with equal weights, as a simple sum or a rescaled mean. Is that the best idea? That’s hard to say. Is social harm as important as physical harm? Is dependence as destructive as social harm? These are assumptions potentially built into this graph, and not everyone will agree with them.

- There are not very many fine shades of deviation in the scale – everything is a multiple of 0.05. It’s not conclusive, but it strongly suggests that 20 values (or a multiple of 20 values) are going into these ratings. Why? Because 1/20 = 0.05. Combined with the mean-of-3-values theory above, this doesn’t make sense. If 5 people were providing 3 ratings each, our smallest distinction would be 1/(5*3) = 1/15 = .067. For 6 people making 3 ratings, 1/(6*3) = 1/18 = .056. For 7 people making 3 ratings, 1/(7*3) = 1/21 = .048. No whole number of raters, each giving 3 ratings, produces a smallest difference of exactly .05: that would require 3 × raters = 20, or about 6.67 raters. So something’s off here.

- Some explanation may be provided by this observation – a huge group in the middle of the list (from #3 Barbiturates to #19 Alkyl Nitrates) differ from their neighbors by only 0.05 or 0.10. And yet no two entries have the same number! That’s extremely suspicious – with such a coarse scale and what appears to be a small group of raters, we’d expect at least a little overlap. That suggests that either 1) this list was modified by the editor after it was formed or 2) the “experts” came to some sort of group agreement, casting even more doubt on the impartiality of the results.

- I generally don’t trust anything where the phrase “scientific evidence” is thrown around so casually. It’s not scientific because you say it is.
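
The arithmetic behind the 0.05 and no-ties observations can be checked with a few lines of Python. This is only a rough sketch under my own assumptions, not anything taken from the chart: I assume each rater gives 3 ratings that each move in steps of one unit, that the published score is a plain mean of all of them, and, for the tie check, that each drug’s score lands independently and uniformly on a grid of possible values. The function names and the grid size of 30 are made up for illustration.

```python
from fractions import Fraction


def smallest_step(n_raters, n_dims=3):
    """Smallest possible change in a mean of n_raters * n_dims unit-step ratings."""
    return Fraction(1, n_raters * n_dims)


def raters_matching_step(step, max_raters=100, n_dims=3):
    """Every rater count up to max_raters whose smallest step equals `step` exactly."""
    return [n for n in range(1, max_raters + 1)
            if smallest_step(n, n_dims) == step]


def prob_all_distinct(n_items, n_values):
    """Birthday-problem probability that n_items independent, uniform draws
    from n_values possible scores are all different."""
    if n_items > n_values:
        return 0.0
    p = 1.0
    for i in range(n_items):
        p *= (n_values - i) / n_values
    return p


# Step sizes for the rater counts worked through in the list above.
for n in (5, 6, 7):
    print(n, "raters:", round(float(smallest_step(n)), 3))

# Rater counts (1 to 100) that give a step of exactly 1/20 = 0.05.
print(raters_matching_step(Fraction(1, 20)))  # → []

# Chance that 17 entries (#3 through #19) share no score at all,
# ASSUMING a hypothetical grid of 30 possible values.
print(prob_all_distinct(17, 30))
```

The empty list is the point of the fourth bullet: a step of exactly 0.05 would need 3 × raters = 20, which no whole number of raters satisfies. The last line is only as good as its assumptions, but you can vary the grid size to see how quickly a complete absence of ties becomes unlikely.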

I will point out, however, that none of these points are conclusive. They are just the initial reactions I have to a poorly made graphic. These numbers may be valid, but we simply don’t have enough information to trust them.

What could have been done to improve the graphic? Easy – provide details on the methodology. Only a sentence or two might be required, even in another tiny footnote. But as it stands, this graphic tells us practically nothing.


I like the details of your teaching methods and would like to see more posts like this. I’m always interested in hearing about how others run their classrooms.

I couldn’t comment on your page of relevant I/O links so I figured I’d do it here, but I just wanted to say thanks for posting so many amazing resources and that I completely agree that we don’t have nearly enough I-O resources online… it’s odd to me that there isn’t a more centralized way to find Twitter and RSS feeds that are truly I-O (NOT HR, executive coaching that has little to do with any real “evidence” or general business and human capital-related)…

I also wanted to tell you that I’m the owner of Psychology Applied to Life (http://psychoflife.wordpress.com/) and that I’ve more or less moved over to my own domain…. and that I’m doing my best to be a more active blogger and look forward to reading more of your stuff!

It was odd to me too – that’s what led to the list! I’ve updated your web address on the I/O blogs list and have your RSS in my reader. Looking forward to seeing where you go with Psych at Work.

The graphic actually comes from a paper published in the Lancet. I believe it was the front page of the London Independent.

The paper itself is here: Nutt Development of a rational scale to assess the harm of drugs.pdf

Ahh, that is very informative, thank you. It appears the first possibility from my fifth point (“this list was modified by the editor”) is correct – the original paper uses much finer shades of distinction, which changes a lot of my original assumptions. The number of expert raters they used was still 8 to 16 though, depending on the entry – a lot better than 3, but still a fairly small number.

In my undergrad statistics classrooms, I’ve considered a few times looking at how papers are misrepresented in the media (rather than just statistics themselves), but because they don’t have a background in research methods at that point, ultimately concluded it muddied the overall message I wanted to get across.

Maybe in a methods course though…

Thank you so much for updating and adding me – I appreciate it and am very flattered! Let’s hope I can educate the masses about the amazing things I-O can do!

I just want to know how they calculate the “danger rating”. I’ve done most of these substances and I’m still around to comment on it.